Misalignment Mosaic: not treating cooperation as a black box

Authors: Shayak Nandi and Fernanda Eliott, 2025

Here is a link to our paper, and here is a podcast summarizing this work.

1. Focus problem:

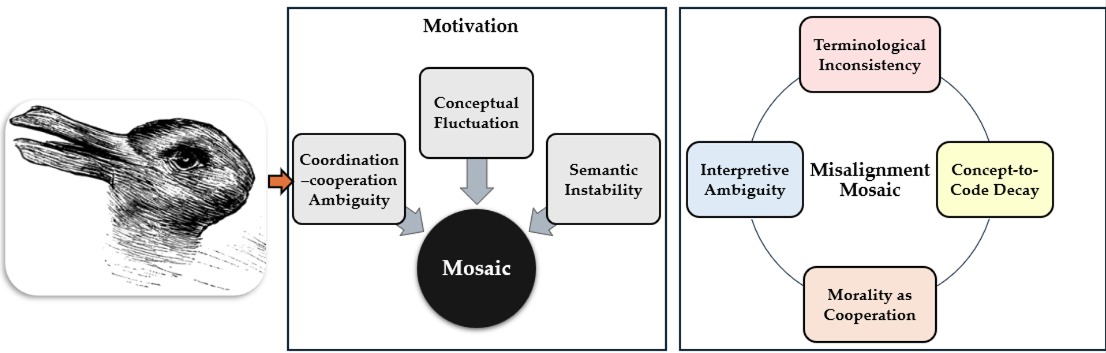

- We use “mosaic” to illustrate that misalignments in Multi-Agent Systems (MAS) occur across shapes, scales, and contexts, together forming a complex, heterogeneous interpretive landscape.

- The Misalignment Mosaic is therefore a framework for diagnosing how semantic and interpretive ambiguities migrate from language into system design and, ultimately, into evaluation failures. It covers four components:

  - Terminological Inconsistency;
  - Interpretive Ambiguity;
  - Concept-to-Code Decay;
  - Morality as Cooperation.

- Together, these components help trace how misalignment arises from linguistic, epistemic, and framing assumptions embedded in MAS.

2. Why does it matter?

- Semantic gaps between how agents behave and how their behavior is framed and morally interpreted remain structurally unaddressed in MAS, despite the growing relevance of MAS in public-facing systems.

- We also call attention to sacrificial cooperation, in which an agent actively relinquishes self-interest or local utility for the benefit of others without guaranteed reciprocity, thereby introducing loss, risk, or asymmetry into its behavior. The central question is therefore: does cooperation involve individual costs or risks without assured gain (sacrificial), or is it grounded in mutual benefit and rational strategy (strategic)?

- So what? In human societies, sacrificial cooperation, where individuals incur personal costs to uphold collective welfare even without guaranteed reciprocity, is central to moral systems. This raises fundamental questions about how MAS interpret, implement, and project cooperation, especially when deployed in environments where human observers may expect morally grounded, sacrificial behavior even when agents are only executing coordinative, utility-maximizing functions.
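To make the sacrificial/strategic distinction concrete, here is a minimal Python sketch. It is not taken from the paper: the `ActionOutcome` fields, the `CooperationKind` labels, and the `classify_cooperation` rule are hypothetical, and the sketch assumes that an action's effect can be summarized as a change in the acting agent's utility, a change in other agents' utility, and a flag for whether reciprocity is guaranteed.

```python
from dataclasses import dataclass
from enum import Enum


class CooperationKind(Enum):
    SACRIFICIAL = "sacrificial"
    STRATEGIC = "strategic"
    NONE = "not cooperative"


@dataclass(frozen=True)
class ActionOutcome:
    """Hypothetical summary of one agent action (not from the paper)."""
    own_payoff_change: float      # change in the acting agent's local utility
    others_payoff_change: float   # aggregate change in other agents' utility
    reciprocity_guaranteed: bool  # True if a compensating return is assured


def classify_cooperation(outcome: ActionOutcome) -> CooperationKind:
    """Illustrative heuristic separating sacrificial from strategic cooperation."""
    # An action that does not benefit others is not treated as cooperation here.
    if outcome.others_payoff_change <= 0:
        return CooperationKind.NONE
    # Sacrificial: the agent gives up local utility with no assured reciprocity.
    if outcome.own_payoff_change < 0 and not outcome.reciprocity_guaranteed:
        return CooperationKind.SACRIFICIAL
    # Otherwise the benefit to others comes with an own gain or a guaranteed
    # return, so it is treated as mutual-benefit (strategic) cooperation.
    return CooperationKind.STRATEGIC


if __name__ == "__main__":
    # The agent pays 1 unit so that others gain 2, with no promise of payback.
    print(classify_cooperation(ActionOutcome(-1.0, 2.0, False)).value)
    # Same cost and benefit, but reciprocity is guaranteed.
    print(classify_cooperation(ActionOutcome(-1.0, 2.0, True)).value)
```

The two example actions look identical to an observer who sees only that others benefit; they differ solely in the reciprocity flag, which is typically invisible from outside. This is one way strategic, utility-maximizing behavior can be overread as sacrificial, morally grounded cooperation.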

3. Discussion:

- We identify three interconnected drivers of interpretive misalignment in MAS:

  - Coordination/cooperation ambiguity: these terms are used interchangeably, blurring conceptual boundaries.
  - Conceptual fluctuation: key terms like cooperation acquire shifting meanings across system architecture, agent behavior, evaluation, and observer framing.
  - Semantic instability: the anchoring of key terms becomes fragile or inconsistent, allowing meaning to drift across subfields, implementations, or observer perspectives.

- Together, these dynamics systematically enable normative overreadings, in which coordination can be misread as cooperation and cooperation as moral alignment, producing interpretive, design-level, evaluative, and ultimately ethical risks.

4. Limitations:

- Our analysis centers on the ambiguity between cooperation and coordination. While we argue that this ambiguity is foundational to many misalignment risks, other overloaded terms in MAS (such as autonomy, alignment, or trust) require parallel diagnostic attention.

- Future efforts should explore how semantic instability propagates across AI domains, particularly as MAS-like architectures underpin increasingly complex agentic systems.

5. Where is it used?

- We are currently testing the framework in our lab in two domains: a multi-agent systems testbed and a human-AI partnership.